What does “Good Environmental Reporting” mean for air quality?

This article was written by Sarah Horrocks, Head of Air Quality & Emissions at AtkinsRéalis and Chair of the Institute of Air Quality Management (IAQM). It follows an IES cross-community event on what makes good environmental reporting, which brought together environmental professionals from across disciplines to explore what good reporting looks like for their specialism. You can catch up on the event on our website.

Good environmental reporting is something we all care about and want to deliver. But what does it mean in practice? Air quality sits in a very interesting space. It’s technical, scientific, and regulated; but it’s also incredibly human. So, how we communicate our actions and findings matters just as much as the modelling or monitoring that goes on behind the scenes. Our reports shape planning decisions, influence policy and affect how communities understand what’s happening around them.

While we have many different end audiences for our reports, across the suite of IAQM guidance there’s a consistent emphasis on:

- Clarity: plain English, defined terminology, logical flow

- Transparency: assumptions explained, uncertainties justified

- Reproducibility: enough information for a competent person to repeat the assessment

- Proportionality: key messages in the main text, detailed work in appendices

These principles reflect what good reporting for air quality should achieve: clear communication, strong evidence and trust in the conclusions reached.

Make the complex accessible

So often, air quality reports aren’t for a single audience; they’re read by other technical disciplines, technical peers, clients, planners, regulators, contractors and community groups. Some need detail; others want reassurance and clarity.

Our reports are often full of acronyms, technical terms and legislative references. They’re familiar to us as practitioners, but they can easily make a report feel impenetrable to someone not involved in the field day to day. We need to make sure our reports are crystal clear: defining terms when they first appear, using language consistently, and avoiding unnecessary jargon. Bear in mind that plain English doesn’t mean dumbing things down; it simply helps to open the door to more readers. When people don’t feel they need a glossary glued to their hand, they’re far more likely to engage with the report contents.

Tell the story

Setting out a clear, logical structure is one of the first steps in helping people understand your assessment process. It not only makes it easier for a competent person to repeat the work and see how you came to your conclusions, but also helps a non‑technical reader follow the thread. What’s the baseline situation? Where are emissions coming from? What methods did we use to assess significance? What did we assume, and why?

I’m also a big believer in the power of a strong executive summary. It’s often the first thing people read – and sometimes the only thing. If someone only has five minutes, that summary should tell them what the situation is, what you did, what you found, and what it means, in a way that’s accessible to non‑specialists.

Paint the picture

Air quality assessments can be very dense, covering multiple time dimensions, pollutants with different behaviours, a range of receptor types, and effects at local and regional scales. My advice is to focus the main report on the key messages and let the heavier technical detail sit in appendices.

Visual aids (tables, charts, maps and diagrams) are hugely important, but make sure they are good quality and clearly labelled; otherwise they can hinder rather than help. Interactive dashboards, story‑map style reports and better visualisation tools can help people follow assessments, rather than feeling overwhelmed by 200‑plus‑page PDFs.

Assumptions and uncertainty

Uncertainty is inevitable in our work, which draws on multiple data sources, assessment techniques and scenarios. Expectations on transparency are increasing, and being clear and upfront about assumptions, data gaps, sensitivity testing and limitations builds trust. If there are different phases of development, justify which ones have been assessed and why. If you have multiple monitoring sites available for model verification, justify your final selection. It is far better to acknowledge uncertainties than to give a false sense of confidence in the data.

Quality assurance underpins everything

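Have you heard about the Swiss Cheese model? Each layer of checking is like a slice of cheese with holes in it: an error only gets through when the holes in every layer line up, so the more independent layers you have, the less likely that becomes.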

A self-check of what you’ve written is important, but independent quality checks are essential, and this stage should never be rushed or skipped. To help your reviewer, clearly document your inputs and assumptions; this makes the review both more thorough and more efficient. It can make the difference between a credible report that stands up to scrutiny and one that raises unnecessary questions.

There are plenty of examples where poor quality assurance (QA) has changed how results were interpreted: for instance, routine monitoring showing exceedances that later turned out to be an artefact of poorly sited equipment. The issue wasn’t the air quality; it was the reporting (or lack of it). When QA is clear from the outset, those misunderstandings simply don’t arise.

Frequent communication between topic leads, shared datasets, aligned assumptions, and a final cross-check are also key.

What’s in the future?

Environmental reporting requirements are likely to change, as will how we produce our assessments. Developments already under way include:

- The use of AI to support large dataset processing and automated reporting

- More visual, interactive reporting in digital format using GIS story boards

Regardless of how technology changes, one thing is clear: a well‑structured, transparent and genuinely accessible report will support better decisions, lead to more informed public engagement and, ultimately, result in better outcomes for health and the environment.

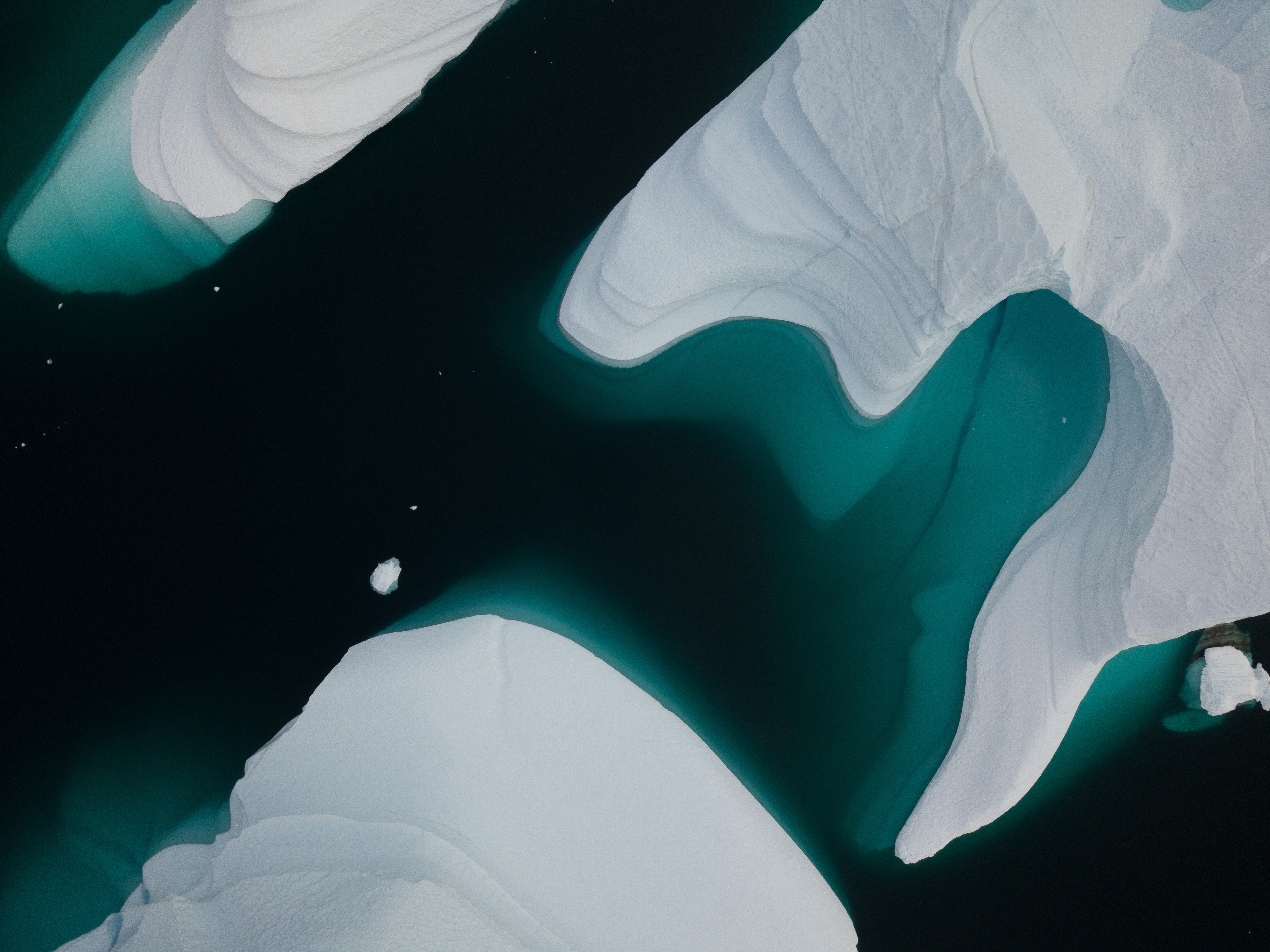

Header image credit: © Pituk via Adobe Stock